The Value of Auditing

This article is about the value of analysis and auditing skills in understanding and improving business processes and organisations, whether the organisation is in the private or government sector.

As an example from the government sector, consider a municipality. A typical municipality has hundreds of processes designed to meet the requirements of local businesses, residents and national government. Three example process areas are:

The effective management of these processes is heavily dependent on internal services provided to employees of the municipality. Potholes may be reported to the municipality in many ways; for example, over the internet by a member of the public, or by a road inspection team. The municipality maintains a database of reported potholes and their current status, e.g. awaiting inspection, urgency or allocation to a team.

The internal provision of reliable Information Technology is therefore an essential enabler for the effective management of pothole repairs. If internal users are unable to access their database, their effectiveness is severely compromised. The same applies to the hundreds of other municipality services, and indeed to the products and services of all organisations, whatever the industry.

To resolve the technology issues of internal users, most Information Technology Divisions maintain a “Help Desk”. These deal with problems like forgotten passwords, printer failures and data corruption.

But how does an organisation know that its IT Help Desk processes are effective at meeting user requirements? One way is to conduct an internal audit.

Case study: Internal Audit of IT Help Desk in a Large Organisation

The Help Desk had six full-time-equivalent staff and a manager. It was operated as a cost centre. The Help Desk manager claimed to be unaware of any user dissatisfaction but offered his full co-operation to the appointed internal auditor.

The auditor began by requesting copies of Help Desk transaction records. These were readily available as the Help Desk had its own system, “HEDS”, which had been installed about 18 months previously. This recorded the exact “Log” date and time, in date-hours-minutes, of each new Help Desk task, and the name of the Help Desk staff member who logged it. Users were meant to notify the Help Desk of a new task by email or telephone, although some nearby users would pay a personal visit to the Help Desk location.

When logged, each task was categorised by the receiving Help Desk staff member as one of six types:

- Minor development change

- Scheduled development change

- Security event

- System critical

- System request

- User urgent

Responsibility for progressing the task was with the receiving Help Desk staff member. Each time an “Action” was taken by one of the Help Desk staff, a row was added to the database against the task number, showing the date and time of the Action. In many cases, the “Action” was to pass the task to a colleague who was more able to deal with the task; this colleague then became responsible for the further progress of the task. When the task was completed, a “Close” row was added to the database, showing the date and time of the closure. All rows had a column for freeform “Comments”.

At the time of the audit, the database had 40,000 rows of entries. The Help Desk manager admitted that no-one ever looked at the database!

In the words of W. Edwards Deming:

Data are not taken for museum purposes; they are taken as a basis for doing something. If nothing is to be done with the data, then there is no use in collecting any. The ultimate purpose of taking data is to provide a basis for action or a recommendation for action.

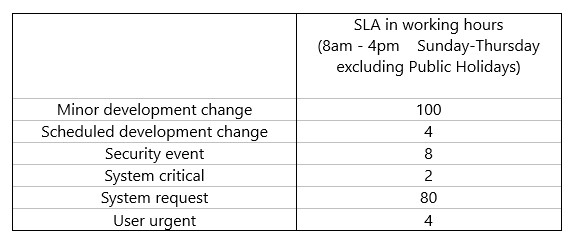

The internal auditor took an Excel copy of this database for analysis. He quickly discovered that, although most Help Desk staff and users were unaware of them, there were Service Level Agreements (SLAs) for maximum elapsed times to resolve each of the six task types:

The Help Desk manager was keen to point out that the team had voluntarily adopted an internal SLA requiring no task to await action by the currently responsible staff member for more than two working days.

There was little consistency in the allocation of task types. Users were generally eager for their problem to be allocated a task type with a quick target completion time, and some senior users were good at browbeating Help Desk staff to this end.

The internal auditor began his analysis by looking for duplicate entries in the 40,000 rows of data. He quickly found that about 1 in 8 of the rows appeared to be exact duplicates of the previous row. However, closer examination revealed a tiny difference in the date/time values of these adjacent rows, which was not visible when the values were displayed in date-hours-minutes format. For example, two consecutive rows were both displayed as 22 March 2018 09.19. The unformatted values (in days) were 43181.3888346412 and 43181.3888348032; the difference between them is 0.0000001620 days, i.e. 0.014 seconds. It seemed highly likely that HEDS had created each duplicate row within a few hundredths of a second of creating the original row. No technical reason could be found for this. The auditor wrote an Excel macro to delete all such duplicate rows from his copy of the database.
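The arithmetic in this example, and the kind of clean-up the macro performed, can be illustrated with a short Python sketch. This is not the auditor's Excel macro. The row layout (task number, staff member, row type, date/time in days) and the one-second tolerance are assumptions made for illustration only.

```python
from datetime import datetime, timedelta

# Excel's 1900 date system effectively counts days from 30 December 1899
# (for any date after February 1900).
EXCEL_EPOCH = datetime(1899, 12, 30)
SECONDS_PER_DAY = 24 * 60 * 60


def excel_serial_to_datetime(serial):
    """Convert an Excel serial date (in days) to a Python datetime."""
    return EXCEL_EPOCH + timedelta(days=serial)


def gap_in_seconds(serial_a, serial_b):
    """Absolute difference between two Excel serial dates, in seconds."""
    return abs(serial_a - serial_b) * SECONDS_PER_DAY


def drop_near_duplicates(rows, tolerance_seconds=1.0):
    """Drop rows that repeat the previously kept row almost instantly.

    Each row is a tuple whose last element is the Excel serial date/time.
    The tuple layout and the tolerance are hypothetical, not taken from HEDS.
    """
    kept = []
    for row in rows:
        if kept:
            previous = kept[-1]
            same_fields = row[:-1] == previous[:-1]
            close_in_time = gap_in_seconds(row[-1], previous[-1]) <= tolerance_seconds
            if same_fields and close_in_time:
                continue  # treat as a system-generated duplicate
        kept.append(row)
    return kept


# The two values quoted in the article fall in the same displayed minute
# but differ by roughly 0.014 seconds.
first = excel_serial_to_datetime(43181.3888346412)
second = excel_serial_to_datetime(43181.3888348032)
same_minute = first.replace(second=0, microsecond=0) == second.replace(second=0, microsecond=0)
gap = gap_in_seconds(43181.3888346412, 43181.3888348032)
```

Comparing the unformatted values rather than the displayed text is what exposes the difference: at date-hours-minutes resolution the two rows are indistinguishable.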

It was agreed that the most important measure to users was the deviation from SLA for a task, i.e. the elapsed time in working minutes between Log and Close compared with the SLA for that task type. The next step in cleansing the data was to examine all outliers, i.e. tasks whose deviation from SLA lay more than four standard deviations from the mean. The auditor wrote Excel macros to calculate the deviation from SLA for all Closed tasks in the database and to identify any tasks that qualified as outliers. These were then reviewed to check that the data was correct and not the result of a keying error.
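The outlier check described above can be sketched in Python as follows. This is an illustration rather than the auditor's Excel macros. It assumes that the elapsed working minutes for each Closed task have already been calculated, that deviation is defined as elapsed minutes minus the SLA in minutes, and that the sample standard deviation is used. The SLA figures in the sketch are hypothetical placeholders, not the organisation's actual values.

```python
from statistics import mean, stdev

# Hypothetical SLA targets in working minutes, for illustration only.
HYPOTHETICAL_SLA_MINUTES = {
    "User urgent": 240,
    "System request": 2400,
}


def sla_deviation(elapsed_working_minutes, task_type, sla_minutes=HYPOTHETICAL_SLA_MINUTES):
    """Deviation from SLA: positive means the task overran its target."""
    return elapsed_working_minutes - sla_minutes[task_type]


def find_outliers(deviations, k=4.0):
    """Return the tasks whose deviation lies outside mean +/- k standard deviations.

    `deviations` maps a task number to its deviation from SLA in minutes.
    """
    values = list(deviations.values())
    centre = mean(values)
    spread = stdev(values)  # sample standard deviation (an assumption)
    lower, upper = centre - k * spread, centre + k * spread
    return {task: d for task, d in deviations.items() if d < lower or d > upper}
```

Tasks returned by `find_outliers` are candidates for manual review only; as in the audit, a flagged value may turn out to be genuine rather than a keying error.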

The auditor’s analysis showed that a high percentage of tasks were not completed within their SLA, and that this percentage was worse for certain task types.
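A breakdown of this kind can be produced with a few lines of Python. The sketch below is illustrative only and assumes each Closed task is represented as a pair of task type and deviation from SLA in minutes, with a positive deviation meaning the SLA was missed.

```python
from collections import defaultdict


def breach_rate_by_type(tasks):
    """Percentage of tasks that missed their SLA, per task type.

    `tasks` is an iterable of (task_type, deviation_minutes) pairs;
    this layout is an assumption made for the example.
    """
    totals = defaultdict(int)
    breaches = defaultdict(int)
    for task_type, deviation in tasks:
        totals[task_type] += 1
        if deviation > 0:
            breaches[task_type] += 1
    return {t: 100.0 * breaches[t] / totals[t] for t in totals}
```

Reporting the rate per task type, rather than a single overall figure, is what shows where the service is weakest.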

The auditor also performed an analysis of the total number of “Actions” required to complete each task. There was no defined SLA for this measure. The manager believed that most tasks should be completed with just one Action. The auditor’s analysis showed this was often not the case: one task had taken 36 Actions to complete! Not surprisingly, there was a very strong correlation between number of Actions and deviation from SLA. The main reason for this was easy to identify. The internal SLA, which required that no task await action by the currently responsible staff member for more than two working days, was leading to tasks being passed from one staff member to another without the problem being progressed.
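The two calculations behind this finding, counting Actions per task and measuring how closely that count tracks deviation from SLA, can be sketched in Python. The article does not say which correlation measure the auditor used; Pearson's coefficient is assumed here, and the row layout (task number, row type) is hypothetical.

```python
from collections import Counter
from math import sqrt


def actions_per_task(rows):
    """Count the "Action" rows recorded against each task number.

    `rows` is an iterable of (task_number, row_type) pairs.
    """
    return Counter(task for task, row_type in rows if row_type == "Action")


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)
```

A coefficient close to 1 would indicate that tasks with more Actions tend to overrun their SLA by more. It does not by itself explain why, which is where reading the freeform Comments on heavily handed-off tasks becomes useful.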

The auditor’s final report included a number of specific recommendations:

- Drop the “internal SLA”

- Eliminate non-value-added handoffs

- Develop a staff skills matrix to enable each task to be allocated to a staff member who can resolve it first time

- Reconcile the skills matrix with the needs of the Help Desk and take steps to address any skills shortages

- Educate users to reduce their Help Desk needs, e.g. remembering passwords

- Pro-actively manage the work, e.g. produce a daily list of the most delinquent tasks

- Improve the understanding and interpretation of task types

These recommendations were all implemented. Within 3 months the Help Desk service was rated excellent in a user survey.

This article was written by two of our Senior Consultants with GLOMACS.

Stanton will be presenting the GLOMACS training course “Analytical and Auditing Skills” in Dubai on 16-20 September 2018.